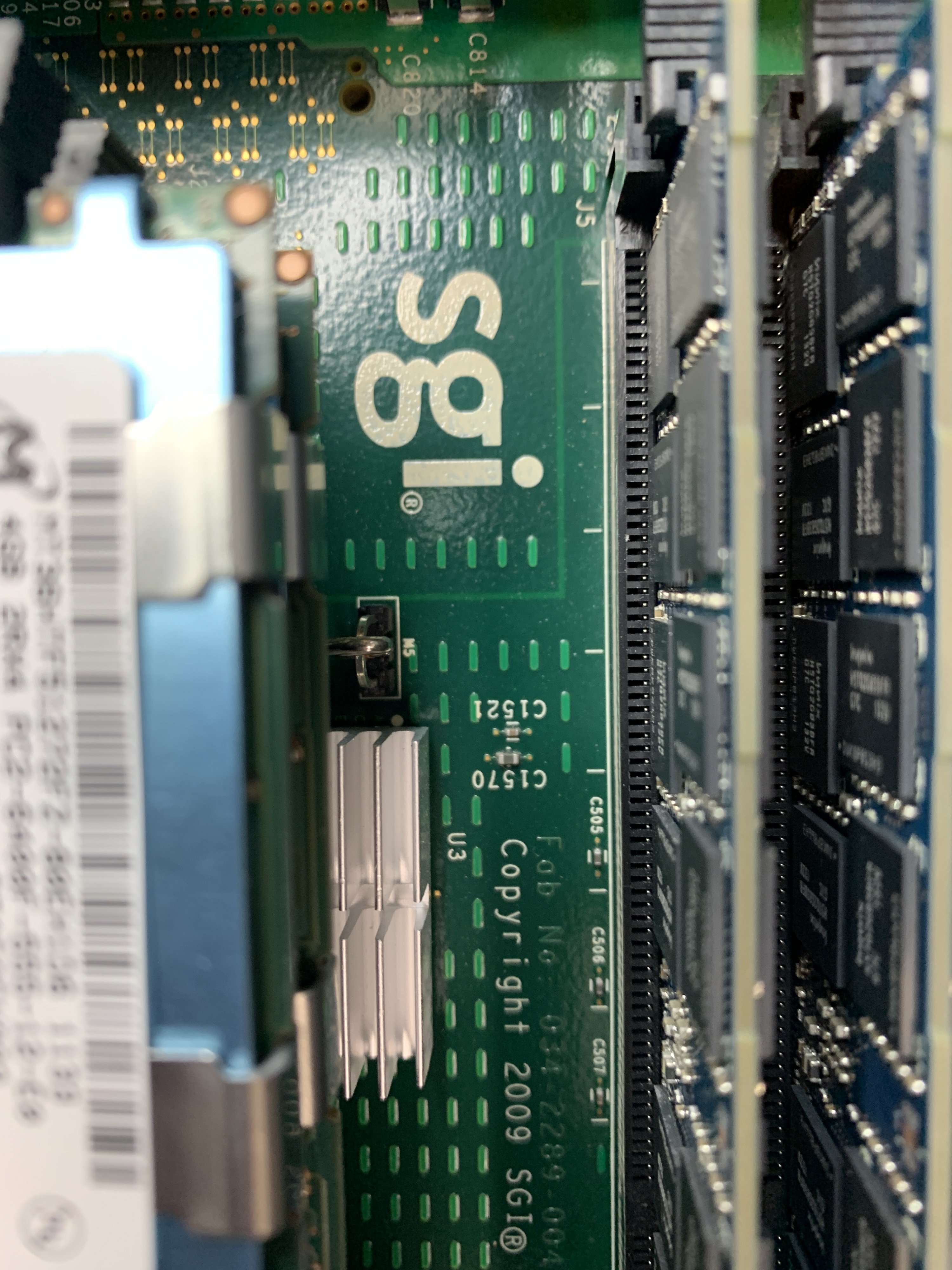

This is a look inside some much later (2009) SGI hardware, based on Intel's Xeon CPUs. I was recently part of a rescue of an Altix UV1000 cluster and had the chance to examine a few of its nodes. Some of the cluster may be restored at some point, but for now nothing boots.

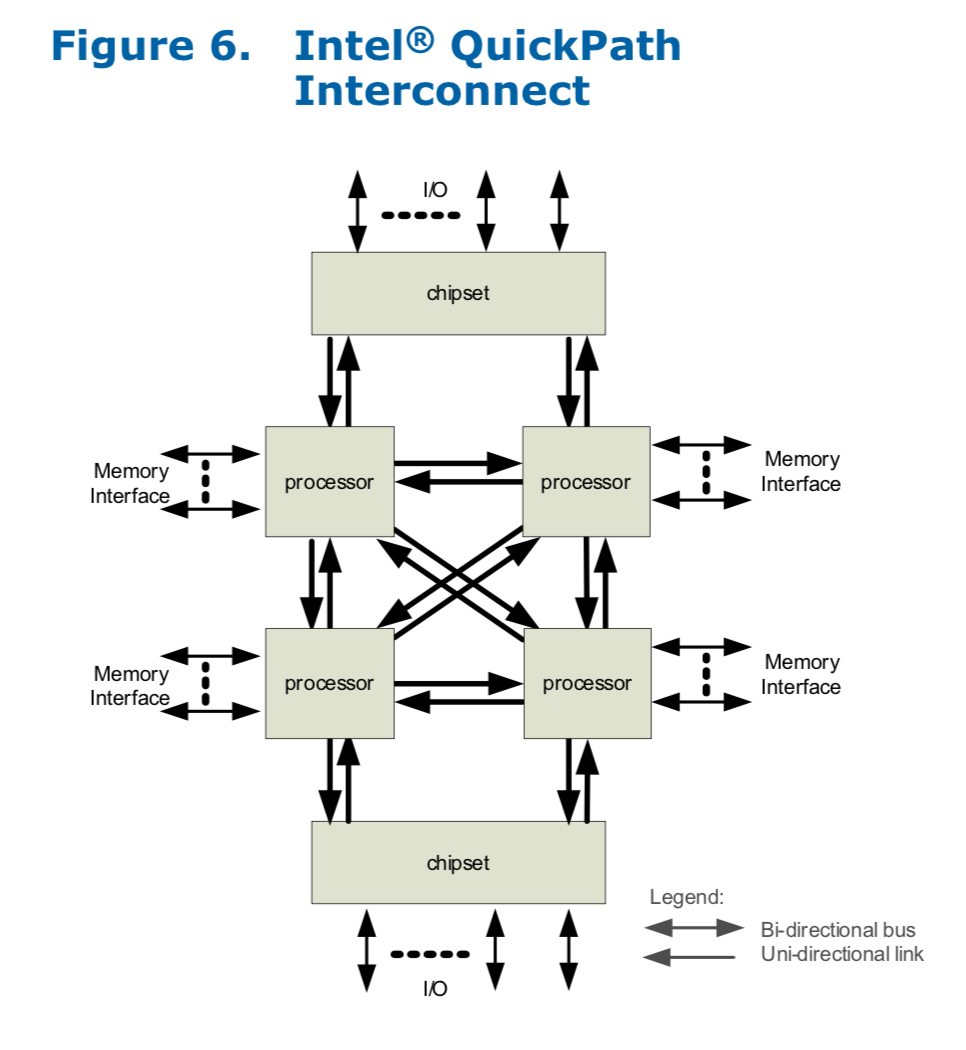

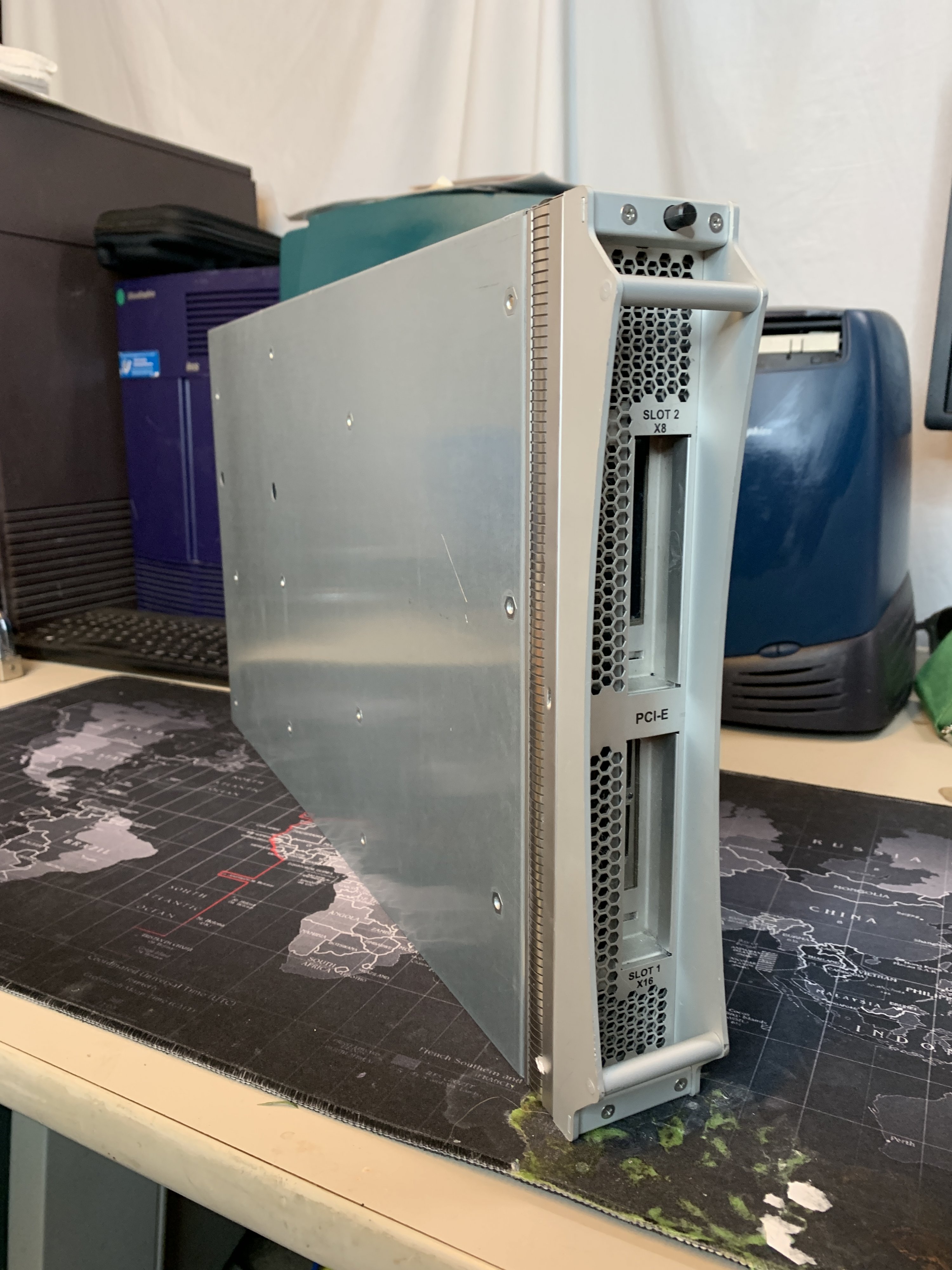

Here are some photos:

PIII, Core Duo and Xeon 7500 CPUs

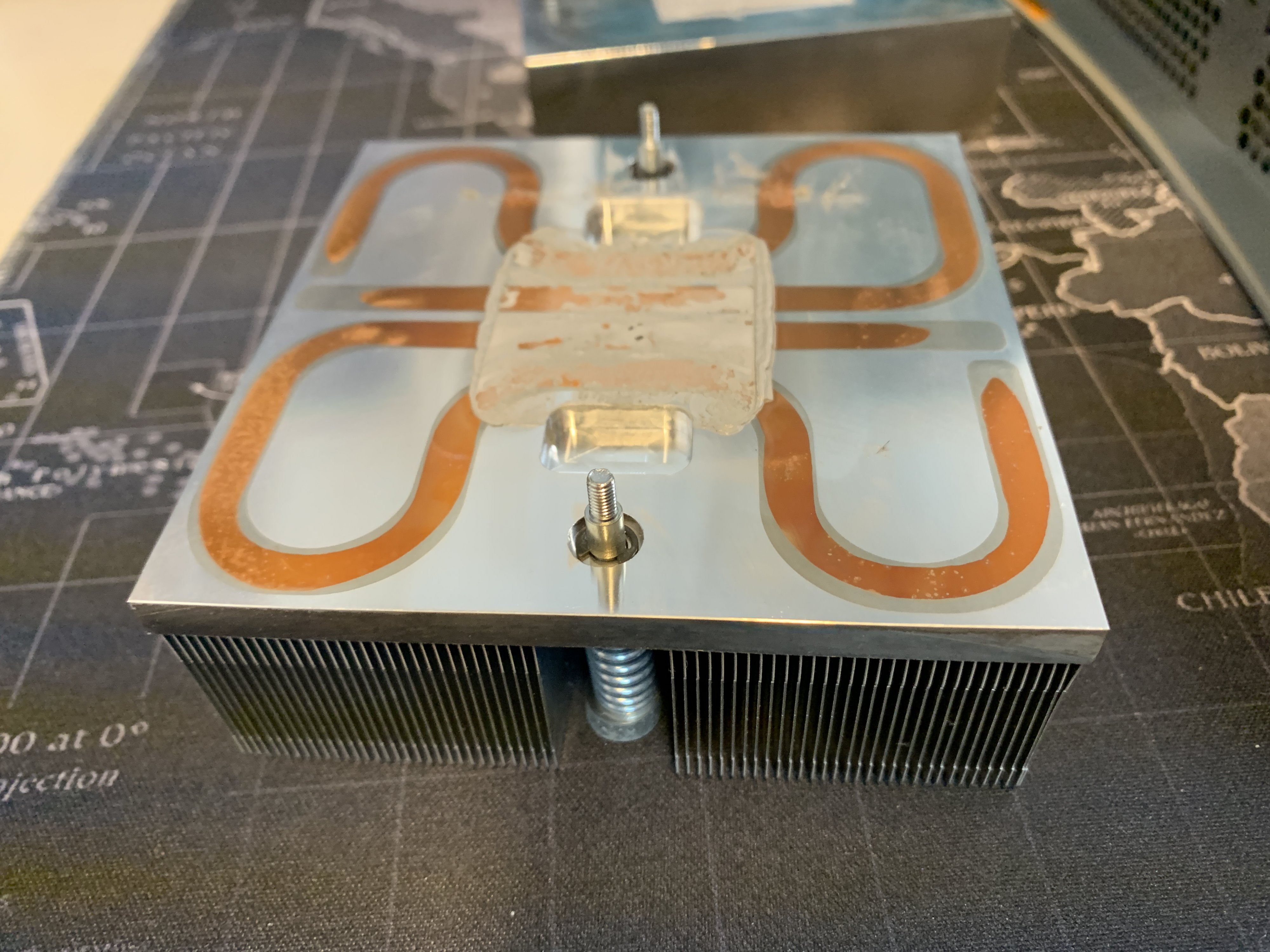

Passive cooling? They could do this because the racks had liquid chiller loops built in to absorb all that heat from the nodes.

Chillers on the back of each section of nodes. Those are giant radiators that get a cold-line loop attached to remove heat.

The UV1000 is based on the Nehalem-EX ("Beckton") class Xeon 7500 CPU, here a 6-core part that does not do Hyper-Threading.

Specs:

- "Beckton" Nehalem CPU

- 6 cores

- 2.67 GHz base / 2.80 GHz turbo, 18 MB L3 cache, 5.86 GT/s QPI, 130 W TDP

- LGA1567

The interesting part of this chip is its multiple QPI ports, which were used as the basis for the NUMAlink 5 interconnect in this system.

The Xeon 7500 and E7 chips have four QPI ports coming off each socket, and the original UV 1000 design used two QPI ports on the Xeon 7500 or E7 chips to cross-link the two sockets together, with one of the remaining two QPI ports going to the Boxboro chipset (which controls access to main memory and local I/O slots on the blade) and the other that links out to the NUMAlink 5 hub, which in turn has four links out to the NUMAlink 5 router. That router implements an 8x8 (paired node) 2D torus that can deliver up to 16TB of shared space across those 256 sockets.

from: https://www.theregister.co.uk/2012/06/19/sgi_uv_2000_xeon_super/ (worth the read)
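To get a feel for what an 8x8 2D torus means, here is a rough Python sketch of wraparound neighbors and hop counts on such a grid. It only illustrates the topology named in the quote; the function names are made up for this post, and how SGI actually mapped blades onto grid points or routed traffic is not something I know. My reading is that each grid point is a pair of two-socket blades, since 64 points x 4 sockets gives the 256 sockets mentioned, but treat that as an assumption.

```python
def torus_neighbors(x, y, width=8, height=8):
    """Return the four wraparound neighbors of (x, y) on a 2D torus.

    Hypothetical helper for illustration; not SGI's routing logic.
    """
    return [
        ((x - 1) % width, y),   # left, wrapping past column 0
        ((x + 1) % width, y),   # right, wrapping past the last column
        (x, (y - 1) % height),  # up, wrapping past row 0
        (x, (y + 1) % height),  # down, wrapping past the last row
    ]


def torus_hops(a, b, width=8, height=8):
    """Minimum hop count between two grid points when both axes wrap."""
    dx = abs(a[0] - b[0])
    dy = abs(a[1] - b[1])
    # On each axis, go whichever way around the ring is shorter.
    return min(dx, width - dx) + min(dy, height - dy)


print(torus_neighbors(0, 0))
print(torus_hops((0, 0), (7, 7)))
print(torus_hops((0, 0), (4, 4)))
```

The point of the wraparound is that opposite corners of the grid are only two hops apart, and the worst case on an 8x8 torus is 8 hops rather than the 14 a plain 8x8 mesh would need.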

Keeping shared state across 256 CPU sockets (1536 cores) must have been pretty amazing when it was going full-on.
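For anyone who wants to check the numbers, this is a quick back-of-the-envelope sketch in Python. The socket count, core count and 16 TB figure come from the specs and the quote in this post; treating 1 TB as 1024 GB and assuming memory is spread evenly across sockets are my own simplifications.

```python
SOCKETS = 256           # from the quoted article
CORES_PER_SOCKET = 6    # 6-core Xeon 7500 part
SHARED_MEMORY_TB = 16   # maximum shared space from the quoted article

total_cores = SOCKETS * CORES_PER_SOCKET

# Assumption: 1 TB = 1024 GB and memory is divided evenly.
memory_per_socket_gb = SHARED_MEMORY_TB * 1024 / SOCKETS
memory_per_core_gb = SHARED_MEMORY_TB * 1024 / total_cores

print(f"total cores: {total_cores}")
print(f"memory per socket: {memory_per_socket_gb:.0f} GB")
print(f"memory per core: {memory_per_core_gb:.1f} GB")
```

That works out to 1536 cores and, at the 16 TB maximum, 64 GB per socket.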

Further Reading:

- https://www.theregister.co.uk/2012/06/19/sgi_uv_2000_xeon_super/

- https://www.intel.com/content/www/us/en/io/quickpath-technology/quickpath-technology-general.html

- https://ark.intel.com/content/www/us/en/ark/products/46497/intel-xeon-processor-x7542-18m-cache-2-66-ghz-5-86-gt-s-intel-qpi.html

- https://en.wikipedia.org/wiki/Nehalem_(microarchitecture)

- https://en.wikipedia.org/wiki/Altix#Altix_UV

The Intel® QuickPath Interconnect is a high-speed point-to-point interconnect. Though sometimes classified as a serial bus, it is more accurately considered a point-to-point link as data is sent in parallel across multiple lanes and packets are broken into multiple parallel transfers.

from: https://www.intel.com/content/dam/doc/white-paper/quick-path-interconnect-introduction-paper.pdf
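As a rough illustration of what "parallel transfers" means for throughput, the sketch below turns the 5.86 GT/s figure from the specs into bandwidth. It assumes a full-width QPI link moves 2 bytes (16 bits) of data payload per transfer in each direction, which is how the headline QPI numbers are normally derived; the function name is made up for this post, and it ignores protocol overhead.

```python
def qpi_bandwidth_gb_s(transfers_gt_s, payload_bytes_per_transfer=2):
    """Return (per-direction, both-directions) bandwidth in GB/s.

    Assumption: 2 bytes of data payload per transfer per direction
    on a full-width link; real throughput is lower after overhead.
    """
    per_direction = transfers_gt_s * payload_bytes_per_transfer
    return per_direction, 2 * per_direction


per_dir, both = qpi_bandwidth_gb_s(5.86)
print(f"{per_dir:.2f} GB/s per direction, {both:.2f} GB/s both directions")
```

Under that assumption, each 5.86 GT/s link on these chips is good for about 11.7 GB/s each way.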
